Audio system set-up

In the embedded Linux world, ALSA (Advanced Linux Sound Architecture) is the lowest-level layer of the audio stack: it provides the sound card device drivers and the API used to access them. However, a hardware device opened directly through ALSA can generally be used by only one process at a time. This is why we need sound servers, which manage the audio streams between applications and the hardware. They are the simplest way to give multiple processes concurrent access to a sound device. The two best-known and most widely used servers are JACK (JACK Audio Connection Kit) and PulseAudio. While JACK provides a simple API and focuses on deterministic latency, PulseAudio aims to be more universal and easier to use.

In 2015, Wim Taymans, a software engineer at Red Hat, started work on PipeWire.

PipeWire is an open-source project for handling multimedia pipelines on Linux systems. The aim of this project is to provide a low-latency, graph-based processing engine on top of audio and video devices. In this series of two articles, we will use PipeWire to replace JACK and PulseAudio in a demo embedded audio project and see how its performance compares with theirs. This first part presents the Linux audio stack and the two best-known sound servers, JACK and PulseAudio.

Demo audio system presentation

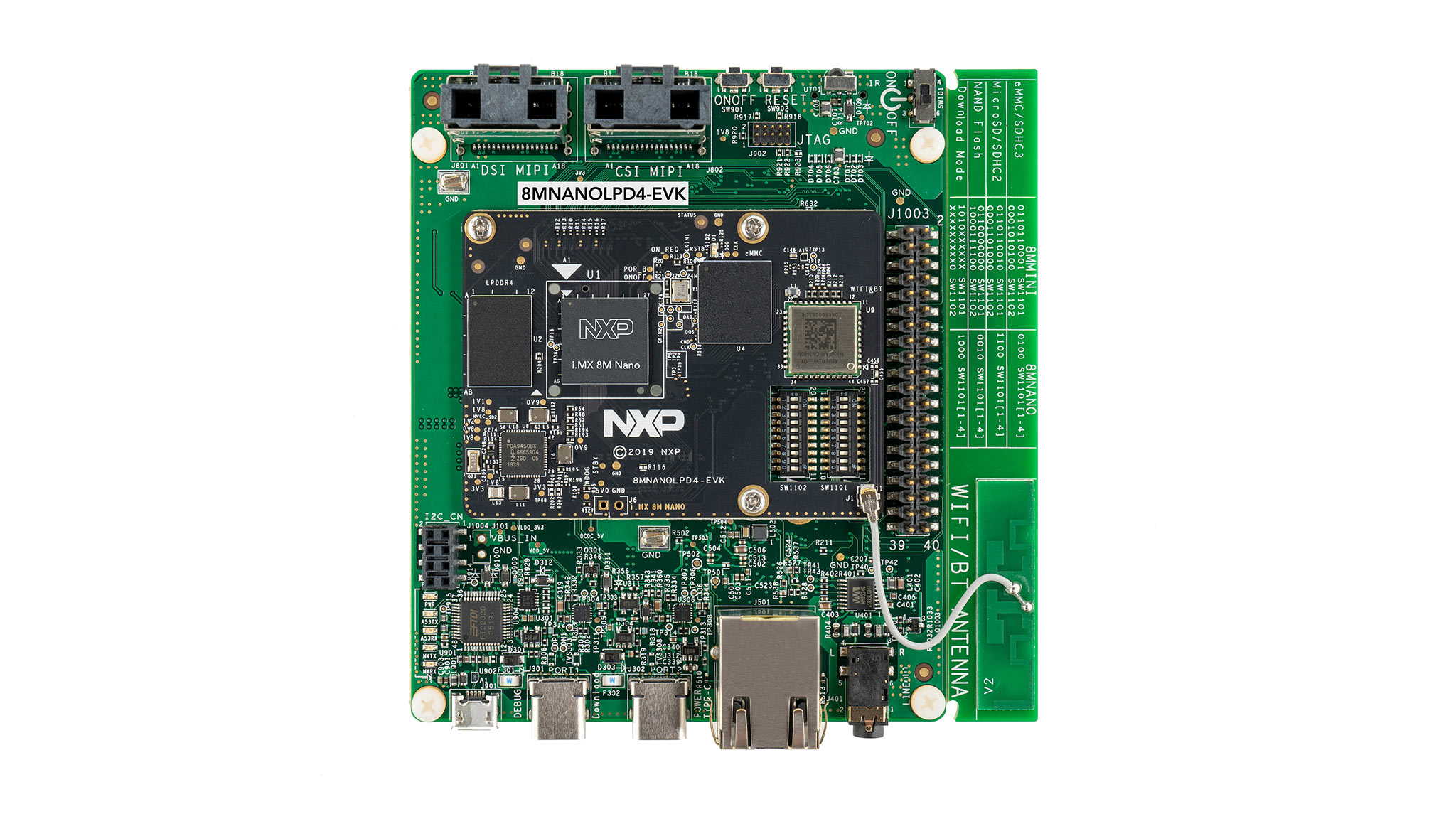

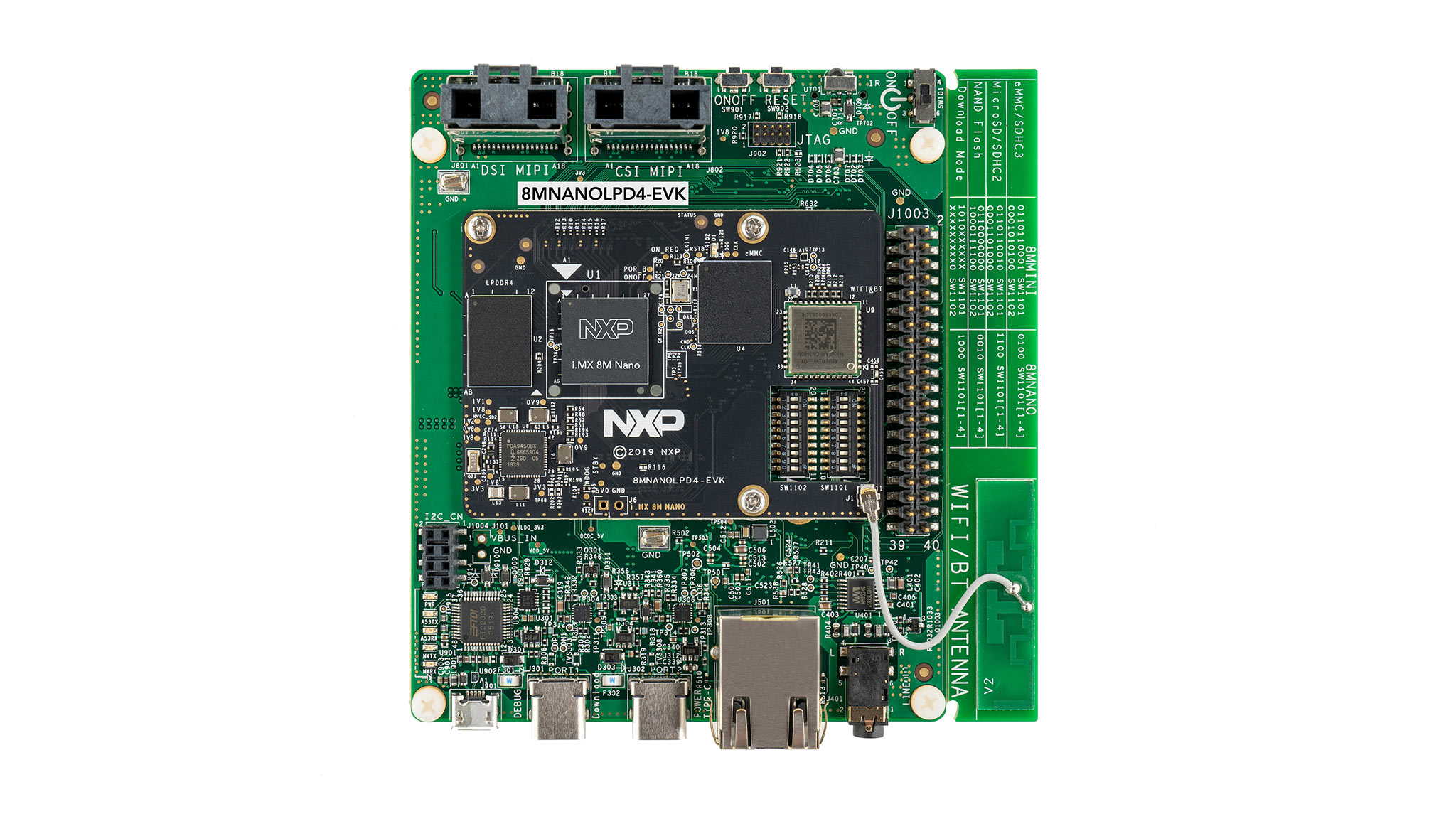

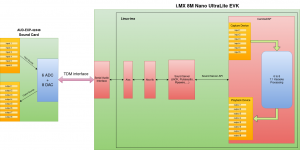

In this project, we use an i.MX 8M Nano UltraLite EVK with an AUD-EXP-42448 audio card. This audio card is based on the Cirrus Logic CS42448 codec and offers six input channels (two stereo jacks plus two mono jacks) and eight output channels (four stereo jacks).

An external multi-port USB sound card is used to play test audio samples from a computer and to measure the latency.

We used the Yocto Project to build a Linux distribution with the linux-imx kernel and a custom device tree for the i.MX 8M Nano UltraLite EVK board that adds support for the AUD-EXP-42448 audio card.

Three different sound servers (JACK, PulseAudio, PipeWire) are installed on separate images, each running the same audio application, CamillaDSP.

CamillaDSP is a tool for creating audio processing pipelines. It is written in Rust and runs on Linux, macOS and Windows. ALSA, PulseAudio and JACK are currently supported as backends for both capture and playback. This versatility makes it a great tool for testing the different sound servers.

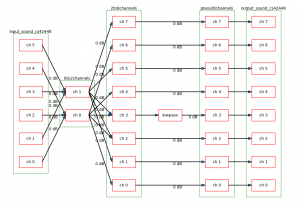

In this study, we use CamillaDSP to design a simple 7.1 karaoke use case.

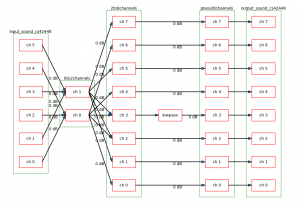

6-input to 8-output 7.1 karaoke CamillaDSP configuration (diagram generated by CamillaDSP)

Two stereo and two mono inputs are mixed down to a single stereo stream, which is then routed to the four stereo outputs. To simulate a subwoofer output channel, the audio samples of channel 3 are passed through a 120 Hz low-pass filter.
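To make the routing concrete, here is a minimal pure-Python sketch of the same idea. It is not the CamillaDSP configuration used in the project. The sample rate, the input channel order, the 0.25 mixing gain, the filter Q and the reading of "channel 3" as zero-based index 3 are all assumptions made for illustration. The low-pass coefficients follow the widely used RBJ Audio EQ Cookbook formulas.

```python
import math

SAMPLE_RATE = 48000  # Hz (assumed; the article does not state the rate)


def lowpass_coefficients(cutoff_hz, sample_rate, q=0.7071):
    """Normalized biquad low-pass coefficients (RBJ Audio EQ Cookbook)."""
    w0 = 2.0 * math.pi * cutoff_hz / sample_rate
    alpha = math.sin(w0) / (2.0 * q)
    cos_w0 = math.cos(w0)
    a0 = 1.0 + alpha
    b = [(1.0 - cos_w0) / 2.0 / a0, (1.0 - cos_w0) / a0, (1.0 - cos_w0) / 2.0 / a0]
    a = [-2.0 * cos_w0 / a0, (1.0 - alpha) / a0]
    return b, a


def biquad(samples, b, a):
    """Apply a direct form I biquad filter to a list of samples."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x0 in samples:
        y0 = b[0] * x0 + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        out.append(y0)
        x2, x1 = x1, x0
        y2, y1 = y1, y0
    return out


def karaoke_mix(inputs):
    """Mix six input channels into eight output channels.

    inputs: six equal-length lists, assumed to be ordered as
    [stereo1_L, stereo1_R, stereo2_L, stereo2_R, mono1, mono2].
    """
    s1l, s1r, s2l, s2r, m1, m2 = inputs
    gain = 0.25  # assumed: four sources per side, scaled to avoid clipping
    left = [gain * (w + x + y + z) for w, x, y, z in zip(s1l, s2l, m1, m2)]
    right = [gain * (w + x + y + z) for w, x, y, z in zip(s1r, s2r, m1, m2)]
    # Copy the stereo mix to four stereo pairs: L, R, L, R, L, R, L, R.
    outputs = [list(ch) for _ in range(4) for ch in (left, right)]
    # Simulated subwoofer: 120 Hz low-pass on channel 3 (zero-based, assumed).
    b, a = lowpass_coefficients(120.0, SAMPLE_RATE)
    outputs[3] = biquad(outputs[3], b, a)
    return outputs
```

Calling `karaoke_mix` with six lists of samples returns eight lists. Only the channel at index 3 differs from the plain stereo mix, because it keeps just the low-frequency content.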

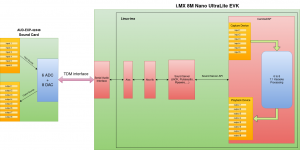

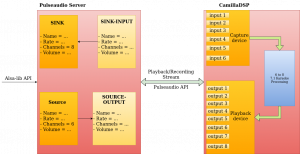

The following diagram shows how our audio system works.

The general workflow is standard: an audio application (CamillaDSP) runs and communicates with the sound server (JACK, PulseAudio, PipeWire, …) through its API. The sound server reads audio samples from ALSA and writes them to ALSA using the alsa-lib API.

At the kernel level, the audio samples are handled by the sound card machine driver, which sends audio data through the SAI driver and controls the CS42448 codec through its codec driver. In this study, we swap the sound server to compare the performance of each solution in terms of CPU consumption and latency.

Sound servers

Let’s first look at the two best-known servers, JACK and PulseAudio.

JACK

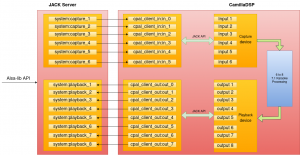

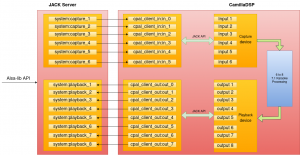

At initialization, the JACK server opens the specified ALSA device and creates the associated capture and playback audio ports. These ports represent every playback and capture channel of the hardware device.

An application that uses the JACK API is called a JACK client. With the JACK API, a client can open an external client session with a JACK server and declare its own input/output ports. For example, as we can see in the diagram, CamillaDSP declares its own input/output ports named cpal_client_in/_out, which can be connected to the system ports or to other clients' ports. The transmission of audio samples between ports is handled by the JACK server. The client registers a processing callback, which the JACK server calls once per period.
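The callback-per-period model can be illustrated with a toy simulation. This is not the JACK API: `ToyServer`, `register`, `run_period` and `half_gain` are hypothetical names, and the 48 kHz sample rate and 256-frame period are assumed values. The sketch only shows that the server drives the processing, one period at a time, and that the period size and sample rate together fix the duration of each processing cycle.

```python
class ToyServer:
    """Toy stand-in for a callback-driven sound server (not the JACK API)."""

    def __init__(self, sample_rate, period_frames):
        self.sample_rate = sample_rate
        self.period_frames = period_frames
        self.callbacks = []

    def register(self, callback):
        """Register a client processing callback."""
        self.callbacks.append(callback)

    def period_seconds(self):
        """Duration of one period, i.e. how often callbacks are invoked."""
        return self.period_frames / self.sample_rate

    def run_period(self, capture):
        """Pass one period of frames through each registered client in turn.

        A real server routes buffers along an arbitrary port graph; a simple
        chain is used here to keep the sketch short.
        """
        buffer = capture
        for callback in self.callbacks:
            buffer = callback(buffer, self.period_frames)
        return buffer


def half_gain(frames, nframes):
    """Hypothetical client callback: attenuate the first nframes by half."""
    return [0.5 * s for s in frames[:nframes]]


server = ToyServer(sample_rate=48000, period_frames=256)  # assumed values
server.register(half_gain)
out = server.run_period([1.0] * 256)
period_ms = server.period_seconds() * 1000.0
```

With these assumed values, each period lasts 256 / 48000 seconds, a little over 5 ms. The client callback has to finish within that time for the output to stay glitch-free.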

PulseAudio

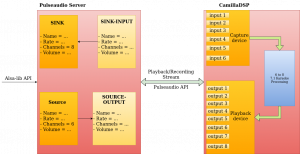

PulseAudio creates a sink for every output device and a source for every input device detected through ALSA. Each sink or source takes on the attributes of its device, including its channels, sample rates, and other properties. Sinks consume audio samples and sources produce them.

When an application wants to send audio to a sink or receive audio from a source, it has to open a playback or recording stream, which carries its own properties such as name, sample rate and channel map. In PulseAudio terminology, these streams are called sink inputs (playback) and source outputs (recording). They are the intermediate step through which audio samples travel between the application and the PulseAudio sinks/sources.

Testing methodology

To check the CPU usage of the application and the server, we use htop for a quick view. We also use perf to analyze the CPU consumption of the different components.
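For readers who want to log per-process CPU usage from a script rather than read it off htop, the same kind of figure can be derived from `/proc/<pid>/stat` on Linux. The sketch below is an illustration, not the procedure used in this study. The helper names are hypothetical, and the field positions follow the proc(5) manual page (`utime` is field 14, `stime` is field 15).

```python
import os
import time


def cpu_ticks(stat_line):
    """Return utime + stime, in clock ticks, from a /proc/<pid>/stat line."""
    # The command name (field 2) may contain spaces or parentheses,
    # so split after the last ')' before counting fields.
    fields = stat_line.rsplit(")", 1)[1].split()
    # fields[0] is field 3 (state), so utime is fields[11], stime fields[12].
    return int(fields[11]) + int(fields[12])


def cpu_percent(ticks_before, ticks_after, elapsed_s, ticks_per_s):
    """CPU usage over the interval, as a percentage of one core."""
    return 100.0 * (ticks_after - ticks_before) / ticks_per_s / elapsed_s


def sample_pid(pid, interval_s=1.0):
    """Measure the CPU usage of a process over interval_s seconds (Linux only)."""
    ticks_per_s = os.sysconf("SC_CLK_TCK")
    with open(f"/proc/{pid}/stat") as f:
        before = cpu_ticks(f.read())
    time.sleep(interval_s)
    with open(f"/proc/{pid}/stat") as f:
        after = cpu_ticks(f.read())
    return cpu_percent(before, after, interval_s, ticks_per_s)
```

Calling `sample_pid` with the PID of the sound server or of CamillaDSP returns a percentage of one core, which is comparable to the per-process figure htop displays.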

To test latency, we measure the end-to-end latency of the whole system with an oscilloscope. The next diagram shows the latency testing chain.

We use Audacity on a computer to generate a rhythm track and send it out to the USB external sound card.

With the USB external sound card, we have two identical sound outputs (Speaker and Headphone in this case). One is connected to our audio system and the other is plugged into channel 1 of the oscilloscope. One of the outputs of our audio system is connected to channel 2 of the oscilloscope. This way, we can measure the latency across the whole system.
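In this study the delay is read directly from the oscilloscope. As an illustration of what that measurement amounts to, the sketch below estimates the lag between two sampled traces by cross-correlation. This is a swapped-in software technique, not the method used here. The function names are hypothetical, and the traces would have to be exported as lists of samples at a known sample rate.

```python
def estimate_delay(reference, delayed, max_lag):
    """Return the lag, in samples, that best aligns delayed with reference.

    Brute-force cross-correlation: for each candidate lag, multiply the
    reference by the shifted signal and keep the lag with the highest score.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        score = sum(r * d for r, d in zip(reference, delayed[lag:]))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def lag_to_ms(lag_samples, sample_rate):
    """Convert a lag in samples to milliseconds."""
    return 1000.0 * lag_samples / sample_rate
```

A short percussive signal, like the rhythm track generated in Audacity, suits this approach because its correlation peak is sharp and unambiguous.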

What will we have in the next article?

In this article, we presented a demo embedded audio project and the audio stack of an embedded Linux system. The sound server is an extremely important component and largely determines the system’s performance. We also showed how two popular sound servers, JACK and PulseAudio, operate. In the next episode, we will present PipeWire and see how it compares with both of these sound servers in terms of CPU usage and latency; this is covered in our second article.